AI Usage

AI Usage in Kahf Products

We are building an advanced AI-driven image and video safety system that protects users from sensitive and inappropriate content. Our technology combines modern computer vision, on-device AI processing, segmentation, NSFW detection, and multi-layered moderation logic to deliver fast, private, and reliable content filtering across web, mobile, and server environments.

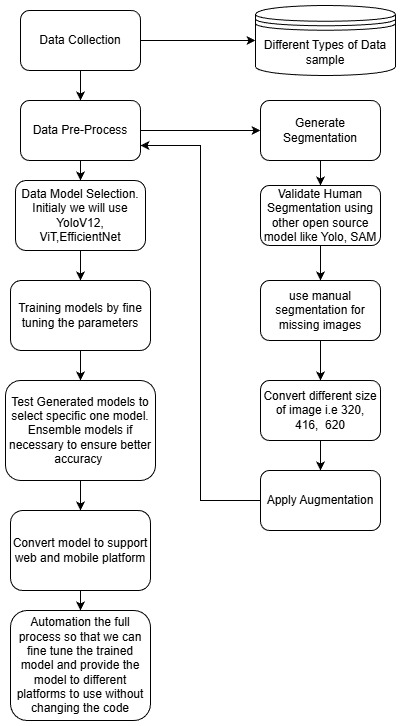

1. Kahf AI Training Pipeline

1. Data Collection

We curate a diverse dataset (>200k images) featuring:

- Male & female body types

- Cultural clothing (abaya, hijab, head coverings)

- Children

- Legs and partial bodies

- Multiple angles, lighting conditions, and occlusions

This variety is intended to give the models broad real-world coverage and balanced representation across the groups listed above.

2. Data Preprocessing & Segmentation

Our preprocessing pipeline includes:

- Image cleaning and normalization

- Automatic segmentation using SAM/YOLO

- Cross-checking masks across multiple segmentation tools

- Manual corrections where needed

- Resizing to multiple input sizes (320, 416, 620)

- Robust augmentations (crop, flip, jitter, occlusion, scale)

This yields accurate segmentation masks for training high-performing models.
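
As an illustration of the multi-size step, the sketch below computes aspect-preserving "letterbox" dimensions for each listed size. Whether the pipeline letterboxes or simply stretches images is not stated here, so the approach and the names `letterbox_params` and `TARGET_SIZES` are assumptions made for the example.

```python
from typing import NamedTuple

# Sizes taken from the list above; everything else here is illustrative.
TARGET_SIZES = (320, 416, 620)


class Letterbox(NamedTuple):
    new_w: int  # resized width before padding
    new_h: int  # resized height before padding
    pad_x: int  # left padding needed to center the image
    pad_y: int  # top padding needed to center the image


def letterbox_params(width: int, height: int, target: int) -> Letterbox:
    """Scale an image to fit a target x target square, keeping aspect ratio."""
    if width <= 0 or height <= 0 or target <= 0:
        raise ValueError("width, height and target must be positive")
    scale = min(target / width, target / height)
    new_w = max(1, round(width * scale))
    new_h = max(1, round(height * scale))
    return Letterbox(new_w, new_h, (target - new_w) // 2, (target - new_h) // 2)


if __name__ == "__main__":
    for size in TARGET_SIZES:
        print(size, letterbox_params(640, 480, size))
```

The same scale and padding would also have to be applied to each segmentation mask so that image and mask stay aligned.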

3. Model Selection

We experiment with several state-of-the-art architectures:

- YOLOv12 for fast detection/segmentation

- Vision Transformers (ViT) for high-quality classification

- EfficientNet for performance-optimized mobile use

4. Model Training & Fine-Tuning

Our training flow includes:

- Hyperparameter sweeps

- Multi-loss optimization

- Early stopping with validation sets

- Fine-tuning on real-world edge cases

5. Evaluation & Ensemble

Models are validated using:

- mAP for detection

- IoU/Dice for segmentation

- Class-wise precision/recall

When beneficial, we combine models via ensembling for improved accuracy.
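
For reference, the segmentation and classification metrics named above can be computed as in the following minimal sketch. It uses plain nested lists as binary masks for readability; a real evaluation would more likely use array libraries, and the function names are illustrative.

```python
from typing import Sequence, Tuple

Mask = Sequence[Sequence[int]]  # binary mask: 1 = foreground, 0 = background


def iou_and_dice(pred: Mask, truth: Mask) -> Tuple[float, float]:
    """Return (IoU, Dice) for two binary masks of the same shape."""
    inter = union = pred_area = truth_area = 0
    for pred_row, truth_row in zip(pred, truth):
        for p, t in zip(pred_row, truth_row):
            p, t = bool(p), bool(t)
            inter += p and t
            union += p or t
            pred_area += p
            truth_area += t
    if union == 0:
        # Both masks empty: treated as perfect agreement (a convention choice).
        return 1.0, 1.0
    return inter / union, 2 * inter / (pred_area + truth_area)


def precision_recall(tp: int, fp: int, fn: int) -> Tuple[float, float]:
    """Per-class precision and recall from true/false positive and false negative counts."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


if __name__ == "__main__":
    print(iou_and_dice([[1, 1], [0, 0]], [[1, 0], [1, 0]]))
    print(precision_recall(tp=8, fp=2, fn=4))
```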

6. Deployment Optimization

We convert and optimize models for:

- ONNX

- TensorRT

- TFLite

- Core ML

- WebAssembly

We apply quantization and pruning for low-latency, device-friendly inference.
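
To make the idea of quantization concrete, here is a small sketch of symmetric per-tensor int8 quantization. This is a generic textbook scheme shown for illustration only; the actual quantization used in deployment is handled by the export toolchains and is not specified here.

```python
from typing import List, Sequence, Tuple


def quantize_int8(weights: Sequence[float]) -> Tuple[List[int], float]:
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: Sequence[int], scale: float) -> List[float]:
    """Recover approximate float values from the int8 representation."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    weights = [0.5, -1.0, 0.25, 0.0]
    q, scale = quantize_int8(weights)
    restored = dequantize(q, scale)
    # The round-trip error per weight is at most about half a quantization step.
    print(q, [round(abs(a - b), 5) for a, b in zip(weights, restored)])
```

Storing weights as 8-bit integers with one float scale is what reduces model size and speeds up inference on constrained devices, at the cost of the small rounding error shown above.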

7. Automated ML Lifecycle

Our system maintains automated workflows for:

- Data ingestion

- Annotation

- Training

- Evaluation

- Model export

- Deployment

This enables continuous improvement at scale.

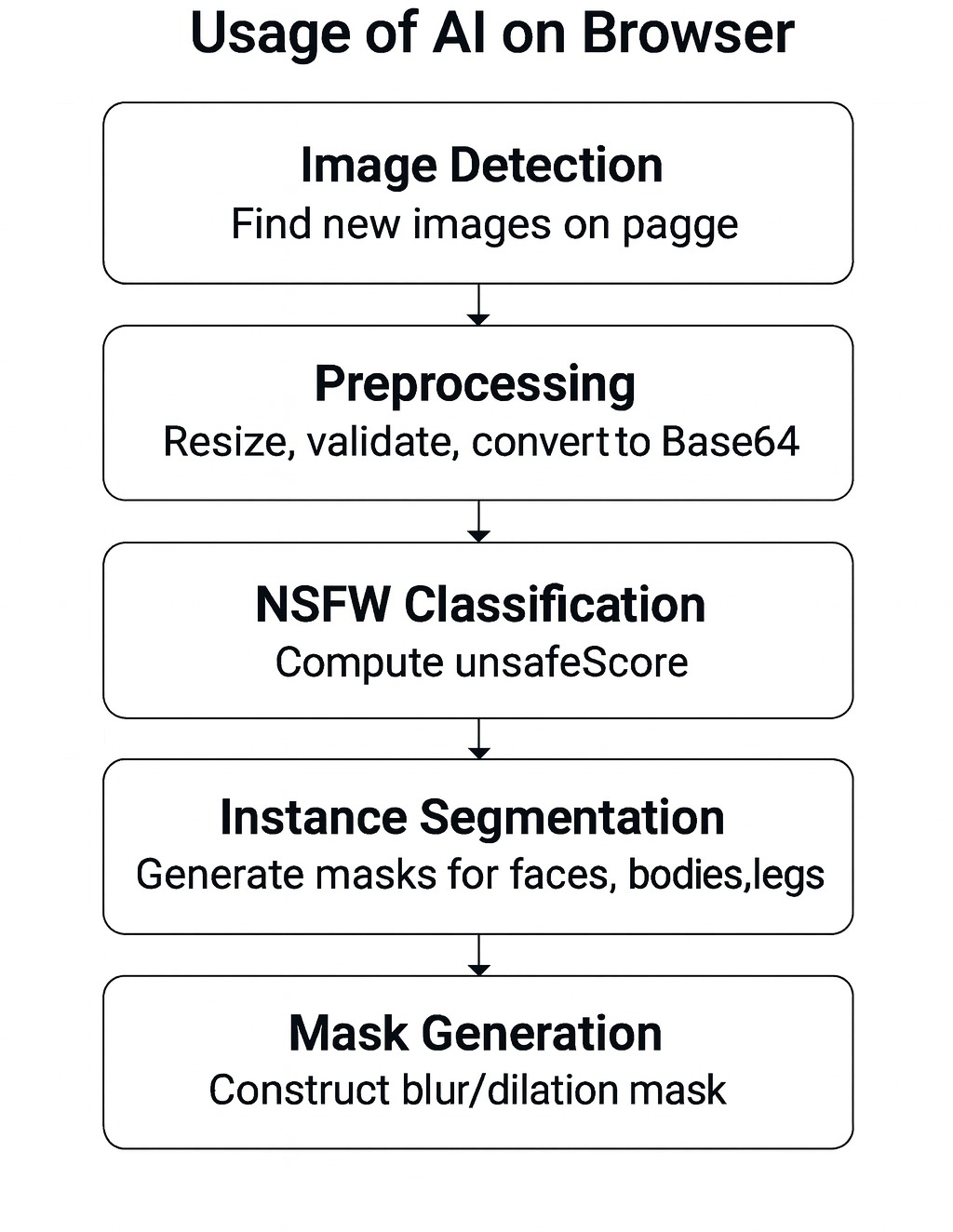

2. AI-Powered Image Moderation on the Kahf Browser

1. Image Detection

- DOM observers detect new images

- IntersectionObserver waits for images to enter viewport

- Offscreen pipelines prevent UI blocking

2. Preprocessing

- CORS-safe fetching

- Base64 conversion

- Size validation

- Resizing for inference

- MD5-based caching
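
The caching step can be sketched as follows. This assumes the MD5 digest is taken over the raw image bytes (it could equally be taken over the base64 string or the URL; this is not specified above), and `ResultCache` is a hypothetical name. The browser implementation would be in JavaScript; Python is used here only to show the logic.

```python
import hashlib
from typing import Callable, Dict


class ResultCache:
    """Cache moderation results keyed by the MD5 digest of the image content."""

    def __init__(self) -> None:
        self._store: Dict[str, dict] = {}

    @staticmethod
    def key_for(image_bytes: bytes) -> str:
        # MD5 serves as a fast content fingerprint here, not as a security measure.
        return hashlib.md5(image_bytes).hexdigest()

    def get_or_compute(self, image_bytes: bytes,
                       compute: Callable[[bytes], dict]) -> dict:
        key = self.key_for(image_bytes)
        if key not in self._store:
            self._store[key] = compute(image_bytes)  # run inference only on a miss
        return self._store[key]


if __name__ == "__main__":
    cache = ResultCache()
    calls = []

    def fake_inference(data: bytes) -> dict:  # stand-in for the real model call
        calls.append(data)
        return {"unsafe": False}

    cache.get_or_compute(b"same-image", fake_inference)
    cache.get_or_compute(b"same-image", fake_inference)
    print(len(calls))  # the second lookup is served from the cache
```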

3. NSFW Classification

- Resize → 224×224

- Normalization

- 5-class NSFW classifier

- unsafeScore = hentai + porn + sexy

- Threshold-based marking
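
The scoring rule above can be expressed in a few lines. In this sketch the three unsafe class names come from the formula above; the other two class names (`drawing`, `neutral`) and the threshold value of 0.5 are assumptions chosen only for the example.

```python
from typing import Mapping

UNSAFE_CLASSES = ("hentai", "porn", "sexy")  # classes summed in the formula above
UNSAFE_THRESHOLD = 0.5                       # hypothetical value; tune per product


def unsafe_score(probs: Mapping[str, float]) -> float:
    """unsafeScore = hentai + porn + sexy; missing classes count as 0."""
    return sum(probs.get(name, 0.0) for name in UNSAFE_CLASSES)


def is_unsafe(probs: Mapping[str, float],
              threshold: float = UNSAFE_THRESHOLD) -> bool:
    """Mark an image as unsafe when the summed score reaches the threshold."""
    return unsafe_score(probs) >= threshold


if __name__ == "__main__":
    # Example classifier output over five classes (two names assumed).
    probs = {"drawing": 0.05, "hentai": 0.10, "neutral": 0.25,
             "porn": 0.40, "sexy": 0.20}
    print(round(unsafe_score(probs), 2), is_unsafe(probs))
```

Summing the three probabilities rather than taking the maximum means an image split between several unsafe classes is still flagged.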

4. Instance Segmentation

- Resize → 320×320

- Segmentation inference

- NMS filtering

- Detection of:

  - Female body

  - Male body

  - Face

  - Legs
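
The NMS filtering step can be illustrated with a standard greedy, per-class non-maximum suppression over bounding boxes. The label strings and the IoU threshold of 0.5 are assumptions for the example; the production model may apply NMS on masks or with different settings.

```python
from typing import List, NamedTuple, Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2)


class Detection(NamedTuple):
    label: str    # hypothetical ids: "female_body", "male_body", "face", "legs"
    score: float  # model confidence
    box: Box


def box_iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def nms(detections: Sequence[Detection], iou_threshold: float = 0.5) -> List[Detection]:
    """Keep the highest-scoring box among same-label boxes that overlap heavily."""
    kept: List[Detection] = []
    for det in sorted(detections, key=lambda d: d.score, reverse=True):
        if all(det.label != k.label or box_iou(det.box, k.box) <= iou_threshold
               for k in kept):
            kept.append(det)
    return kept


if __name__ == "__main__":
    dets = [
        Detection("female_body", 0.9, (0, 0, 10, 10)),
        Detection("female_body", 0.8, (1, 1, 11, 11)),  # near-duplicate, suppressed
        Detection("face", 0.7, (0, 0, 10, 10)),         # different class, kept
    ]
    print([d.label for d in nms(dets)])
```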

5. Mask Generation Rules

- Blur female bodies

- Blur legs

- Blur female faces

- Male body rules configurable

- Apply dilation, smoothing, mask merging
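
A minimal sketch of these rules follows, covering the blur decision, dilation, and mask merging (smoothing is omitted). The label names, the `BlurRules` type, and the default of not blurring male bodies are assumptions; in particular, how a detected face is attributed to a gender is not described above, so a hypothetical `female_face` label stands in for that step.

```python
from dataclasses import dataclass
from typing import List, Sequence

Mask = List[List[int]]  # binary mask: 1 = blur this pixel

ALWAYS_BLUR = frozenset({"female_body", "legs", "female_face"})


@dataclass(frozen=True)
class BlurRules:
    blur_male_body: bool = False  # configurable; this default is an assumption


def should_blur(label: str, rules: BlurRules = BlurRules()) -> bool:
    """Decide whether a detected region should be blurred."""
    if label in ALWAYS_BLUR:
        return True
    if label == "male_body":
        return rules.blur_male_body
    return False


def dilate(mask: Sequence[Sequence[int]], radius: int = 1) -> Mask:
    """Grow the mask by `radius` pixels so the blur covers region edges."""
    h = len(mask)
    w = len(mask[0]) if h else 0
    out = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            if mask[y][x]:
                for ny in range(max(0, y - radius), min(h, y + radius + 1)):
                    for nx in range(max(0, x - radius), min(w, x + radius + 1)):
                        out[ny][nx] = 1
    return out


def merge_masks(masks: Sequence[Sequence[Sequence[int]]]) -> Mask:
    """Union of same-sized masks, so each pixel is blurred at most once."""
    if not masks:
        return []
    h = len(masks[0])
    w = len(masks[0][0]) if h else 0
    return [[int(any(m[y][x] for m in masks)) for x in range(w)] for y in range(h)]


if __name__ == "__main__":
    print(should_blur("male_body"), should_blur("male_body", BlurRules(True)))
    print(dilate([[0, 0, 0], [0, 1, 0], [0, 0, 0]]))
```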

6. Image and Video Frame Processing

- Extract frame → canvas

- Send base64 frame to model

- Receive bounding boxes

- Draw overlays for blur regions

- Update every 50 frames

3. Application Overview

Our system enables trusted browsing and platform-level safety by:

- Detecting bodies, genders, attributes, and visual categories

- Identifying NSFW and borderline content

- Applying culturally sensitive filtering rules

- Supporting multi-platform (browser, mobile, backend) use cases

- Delivering low-latency on-device inference

We continuously train, evaluate, and optimize our models to improve accuracy and coverage.

Fig. 2: Flow diagram of AI on the browser.

4. Image + Video Moderation Using OpenAI

(Hikmah + Mahfil)

System Architecture

1. OpenAI Integration

- GPT-5 mini (Azure) for primary image analysis

- GPT-4o-mini for text processing

- Azure GPT-4 as fallback

2. Hikmah Moderation Engine

- Image moderation (AI + rule-based)

- Text moderation

- Frame-based video moderation

- REST API endpoints for platform integration

3. Mahfil Integration

- Lightweight moderation interface

- Thumbnail-based evaluation

- Uses same moderation rules & logic as Hikmah

Image Moderation

- Convert image URL → base64

- GPT-5 mini evaluates content

- Azure content safety pass

- Rule-based classification:

  - REJECT: explicit content, indecent clothing, severe self-harm

  - REVIEW: violence, anti-Islamic visuals, mild adult content

  - ALLOW: compliant media
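
The rule-based classification can be sketched as a simple mapping from detected categories to a decision. The category identifiers below are hypothetical names for the categories listed above, and giving REJECT precedence over REVIEW when both apply is an assumption, not a documented rule.

```python
from enum import IntEnum
from typing import Iterable


class Decision(IntEnum):
    ALLOW = 0
    REVIEW = 1
    REJECT = 2


# Hypothetical identifiers for the categories listed above.
REJECT_CATEGORIES = frozenset({"explicit_content", "indecent_clothing",
                               "severe_self_harm"})
REVIEW_CATEGORIES = frozenset({"violence", "anti_islamic_visuals",
                               "mild_adult_content"})


def classify(categories: Iterable[str]) -> Decision:
    """Map the categories flagged for an image to a moderation decision."""
    found = set(categories)
    if found & REJECT_CATEGORIES:
        return Decision.REJECT  # assumed to take precedence over REVIEW
    if found & REVIEW_CATEGORIES:
        return Decision.REVIEW
    return Decision.ALLOW


if __name__ == "__main__":
    print(classify(["violence"]).name)
    print(classify(["violence", "explicit_content"]).name)
    print(classify([]).name)
```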

Video Moderation

- Extract frames every 5–15 seconds

- Analyze each frame

- Aggregate results

- GPT-5 generates overall summary + moderation decision
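
The frame sampling and aggregation steps might look like the sketch below. The fixed 10-second default interval and the "most severe frame wins" aggregation are assumptions for illustration; above, the final decision is produced by the model from the aggregated results, so this would at most be a pre-aggregation or sanity check.

```python
from typing import List, Sequence

SEVERITY = {"ALLOW": 0, "REVIEW": 1, "REJECT": 2}


def frame_timestamps(duration_s: float, interval_s: float = 10.0) -> List[float]:
    """Timestamps (seconds) at which to extract frames, starting at 0."""
    if interval_s <= 0:
        raise ValueError("interval_s must be positive")
    times: List[float] = []
    i = 0
    while i * interval_s < duration_s:
        times.append(i * interval_s)
        i += 1
    return times


def aggregate(frame_decisions: Sequence[str]) -> str:
    """Assumed policy: the most severe per-frame decision wins."""
    if not frame_decisions:
        raise ValueError("no frames were analyzed")
    return max(frame_decisions, key=SEVERITY.__getitem__)


if __name__ == "__main__":
    print(frame_timestamps(30, 10))
    print(aggregate(["ALLOW", "REVIEW", "ALLOW"]))
```

A shorter interval catches brief scenes at the cost of more model calls per video, which is presumably why the interval is given as a range rather than a fixed value.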

5. Expected Outcomes

Our multi-model, multi-layered approach enables:

- Accurate human detection + segmentation

- Gender-aware and culturally sensitive moderation

- Real-time on-device image and video filtering

- Privacy-preserving browser-based AI

- High-performance web/mobile AI through optimized models

- A fully automated ML lifecycle for future expansion